Ethical AI Practices for Upwork Agencies

AI can make your agency faster, but only if used responsibly. Upwork agencies are leveraging AI for tasks like job matching, proposal drafting, and performance tracking. However, misuse – such as automated submissions or biased algorithms – can lead to policy violations, reputational damage, or even account suspensions.

To stay compliant and ethical, follow these principles:

- Human Oversight: AI can assist, but humans must review and approve all actions, especially when using AI proposal tools.

- Avoid Automation Pitfalls: No auto-submitting proposals, scraping data, or mass messaging clients.

- Mitigate Bias: Regularly audit training data to prevent biased AI decisions that harm outcomes.

- Protect Data: Anonymize sensitive client information and avoid tools that require Upwork credentials.

- Be Transparent: Always disclose when AI is used in content or decision-making.

Agencies that balance AI efficiency with ethical practices build trust, comply with Upwork policies, and avoid risks like data breaches or biased outputs. By integrating these steps into your workflow, you can scale operations effectively while maintaining integrity.



Following Upwork Rules with AI Tools

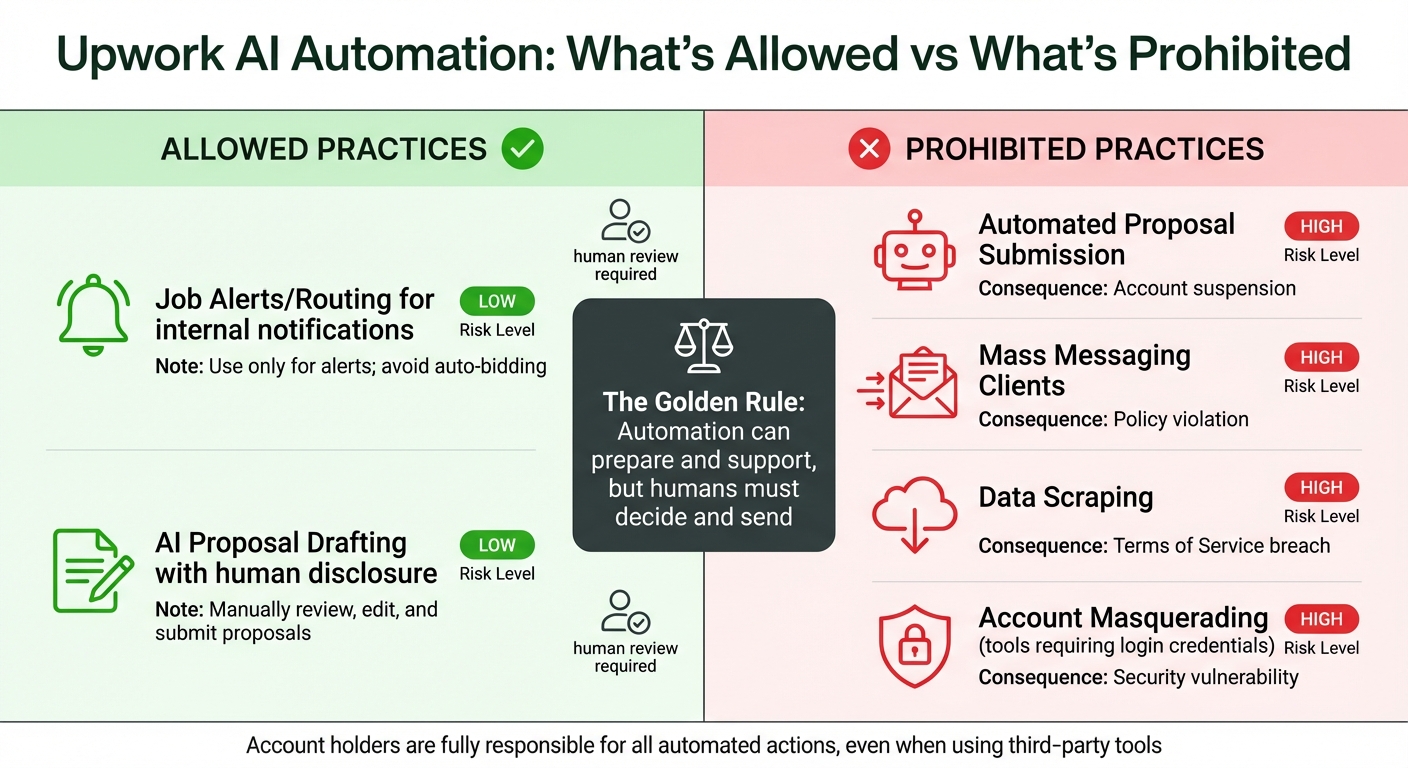

Upwork AI Automation Rules: Allowed vs Prohibited Practices

Upwork’s automation policy is built around a simple yet crucial rule: every automated action must be reviewed and approved by a human. AI can assist in tasks like preparing proposals, matching jobs, or analyzing performance metrics, but it cannot independently submit bids, send messages, or interact with clients. This "human-in-the-loop" approach is designed to prevent spam and ensure that agencies remain in control of client interactions.

The line between acceptable and prohibited activities is clear. Tools that send job alerts (via Slack or email), draft proposals for human review, or track internal metrics are allowed because they assist decision-making. On the other hand, automated proposal submissions, mass messaging to clients, or scraping profile data are not allowed. Even if these actions are carried out by third-party tools, account holders are still fully responsible for any violations.

What Upwork Allows for Automation

Upwork encourages the use of AI tools that enhance human work rather than replace it. For example, job alert systems can notify your team about new project matches, and AI can draft proposals based on your portfolio and past work. However, the final decision and submission must always involve human review. Similarly, internal automations like updating CRM pipelines, tracking response rates, or organizing tasks are acceptable as long as they stay off the Upwork platform.
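One way to picture the human-in-the-loop rule in an internal tool is an approval gate: a draft is not treated as ready until a named person has signed off. The sketch below is illustrative only. `ProposalDraft`, `approve`, and `ready_for_manual_submission` are hypothetical names, not part of any Upwork API, and the actual submission still happens by hand on the platform.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class ProposalDraft:
    job_id: str
    text: str
    ai_generated: bool = True
    approved_by: Optional[str] = None  # name of the human reviewer, if any


def approve(draft: ProposalDraft, reviewer: str,
            edited_text: Optional[str] = None) -> ProposalDraft:
    """Record a human review; the reviewer may also replace the draft text."""
    if not reviewer.strip():
        raise ValueError("A named human reviewer is required")
    if edited_text is not None:
        draft.text = edited_text
    draft.approved_by = reviewer
    return draft


def ready_for_manual_submission(draft: ProposalDraft) -> bool:
    """True only after a human has signed off. Nothing here submits anything."""
    return draft.approved_by is not None


draft = ProposalDraft(job_id="job-123", text="Hi, we have built similar dashboards.")
print(ready_for_manual_submission(draft))  # False: no reviewer yet
approve(draft, reviewer="Dana")
print(ready_for_manual_submission(draft))  # True: a human has approved it
```

The gate deliberately has no "send" function. Keeping submission outside the code is what keeps the workflow on the assist-only side of the line.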

Transparency is key: disclose to clients when content or proposals are AI-generated to build trust. This aligns with Upwork’s core principle – AI supports, but humans remain in charge. Warning signs like unusually high proposal rates, repeated "Are you human?" prompts, or tools requiring direct login credentials can indicate policy violations. If these occur, it’s time to reassess your workflow.

Case Study: An Agency Using AI the Right Way

In September 2025, Marcus Grimm, a marketing automation consultant, shared how integrating AI into his Upwork workflow increased productivity by 30%–40%. He relied on AI for tasks like market research, drafting initial proposals, and analyzing performance. However, every AI-generated proposal was manually reviewed to ensure it met client needs and upheld quality standards. This balanced approach enabled his agency to scale operations while staying compliant with Upwork’s rules. His experience highlights the importance of maintaining human oversight when leveraging AI tools for Upwork.

Comparison Table: Following vs. Breaking the Rules

Here’s a quick reference table that outlines practices allowed by Upwork versus those that violate its policies:

| Practice | Upwork Rule | Risk Level | Mitigation Strategy |
|---|---|---|---|
| Job Alerts/Routing | Allowed for internal notifications | Low | Use only for alerts; avoid auto-bidding |
| AI Proposal Drafting | Allowed with human review and disclosure | Low | Manually review, edit, and submit proposals |
| Automated Proposal Submission | Prohibited | High | Disable auto-submit features; require manual approval |
| Mass Messaging Clients | Prohibited | High | Ensure human intervention before outreach |
| Data Scraping | Prohibited | High | Use official APIs or conduct manual research |
| Account Masquerading | Prohibited | High | Avoid tools requiring direct login credentials |

While AI tools can significantly streamline operations, human oversight remains essential to ensure compliance and maintain ethical standards. As Vadym Ovcharenko of GigRadar aptly puts it:

"The safest way to think about it: automation can prepare and support, but humans must decide and send."

Main Ethical Issues to Address

While following Upwork’s automated action policies is a must, agencies also need to tackle bigger ethical concerns when using AI for Upwork lead generation. These include bias in decision-making, data privacy risks, and transparency gaps. If ignored, these issues could harm your reputation, expose you to legal trouble, or negatively impact those interacting with your AI systems.

Removing Bias from AI Models

AI systems are only as good as the data they’re trained on. If the training data is biased, the AI’s decisions will reflect that bias. A study by the London School of Economics found that biased training data resulted in systematic gender bias in AI outputs, highlighting the importance of using diverse data and fairness constraints. Similarly, the ongoing case Mobley v. Workday (March 2025) involves allegations of racial, age, and disability bias in AI-powered hiring tools, showing how real-world consequences can arise from biased algorithms.

For Upwork agencies, bias can creep into lead scoring or job matching. For example, if your AI model is trained mainly on successful proposals from a specific demographic, it might unfairly prioritize certain candidates or opportunities.

"AI systems are only as fair as the data they’re trained on. When algorithms rely on skewed datasets, the result can be biased AI decision-making that reinforces existing inequalities." – The Upwork Team

To tackle this issue, audit your training data regularly to identify and address imbalances. Use datasets that represent a variety of backgrounds, industries, and project types. Incorporate fairness constraints – mathematical rules that limit biased outcomes – into your algorithms. Monitor how your AI performs across different demographic groups and adjust when disparities arise. And don’t forget the human touch: regular oversight is key to catching biases that the AI might miss. The same habit of regular review carries over to how you handle client data.
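A simple audit of this kind can be sketched as a comparison of selection rates across groups. The example below is a minimal illustration, not a complete fairness method. The segment labels, the sample records, and the 0.8 threshold are assumptions (the threshold borrows the "four-fifths" rule of thumb used in employment selection), so tune them to your own data.

```python
from collections import defaultdict


def selection_rates(records):
    """records: iterable of (group, was_selected) pairs -> {group: share selected}."""
    totals = defaultdict(int)
    selected = defaultdict(int)
    for group, was_selected in records:
        totals[group] += 1
        if was_selected:
            selected[group] += 1
    return {group: selected[group] / totals[group] for group in totals}


def disparity_ratio(rates):
    """Lowest selection rate divided by the highest; 1.0 means perfectly even."""
    highest = max(rates.values())
    if highest == 0:
        return 1.0  # nothing was selected anywhere, so there is no gap to report
    return min(rates.values()) / highest


# Hypothetical lead-scoring outcomes: (client segment, did the model shortlist the job?)
records = [
    ("segment_a", True), ("segment_a", True), ("segment_a", False), ("segment_a", True),
    ("segment_b", True), ("segment_b", False), ("segment_b", False), ("segment_b", False),
]

rates = selection_rates(records)
ratio = disparity_ratio(rates)
THRESHOLD = 0.8  # illustrative cut-off, not a legal or platform standard
print(rates)
print("needs human review" if ratio < THRESHOLD else "within threshold")
```

A low ratio does not prove bias on its own. It is a prompt for a person to look at why one group is being shortlisted less often.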

Protecting Data Privacy and Security

Using client information in AI tools comes with risks. For example, in 2025, Scale AI – a company working with Meta and Google – accidentally exposed sensitive contractor data, including email addresses and pay details, through publicly accessible links. This breach led to major clients halting their collaborations and triggered an internal review.

For agencies, the risks are just as serious. Client job descriptions, budgets, and project details are often confidential. If your AI tool stores or uses this data for training, you could unintentionally leak sensitive information or violate privacy laws like GDPR or CCPA.

To avoid these pitfalls, anonymize all inputs before feeding them into AI systems. Strip out client names, company identifiers, and specific project details unless they’re absolutely necessary. Encrypt data during transfer and storage, and avoid using third-party tools that require your Upwork login credentials, as this can create security vulnerabilities and breach platform policies. Regularly review your data-handling practices to ensure they comply with current legal standards. Keeping data secure is a critical first step toward building trust and transparency.
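As a rough illustration of the anonymization step, the sketch below masks email addresses, URLs, and a list of known client names before text is passed to an AI tool. The patterns and the `anonymize` helper are assumptions made for this example. Simple regular expressions will miss things like phone numbers or indirect identifiers, so treat this as a starting point rather than a guarantee.

```python
import re

# Deliberately simple patterns; they are illustrative, not exhaustive.
EMAIL_RE = re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+")
URL_RE = re.compile(r"https?://\S+")


def anonymize(text, known_names=()):
    """Replace emails, URLs, and listed client names with neutral placeholders."""
    text = EMAIL_RE.sub("[EMAIL]", text)
    text = URL_RE.sub("[URL]", text)
    for name in known_names:
        text = re.sub(re.escape(name), "[CLIENT]", text, flags=re.IGNORECASE)
    return text


brief = "Contact jane@acme.com at https://acme.com/brief for Acme Corp"
print(anonymize(brief, known_names=["Acme Corp"]))
# Contact [EMAIL] at [URL] for [CLIENT]
```

Keeping the list of client names in your own system, rather than asking the AI tool to find them, means the raw names never leave your environment.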

Making AI Decisions Clear and Transparent

Many AI models operate like black boxes, making decisions without revealing how they arrived at them. This lack of transparency can make it difficult to verify if decisions are fair, accurate, or ethical.

"If stakeholders can’t interpret how an AI tool reached a conclusion, it’s nearly impossible to verify whether that decision aligns with ethical principles or even legal standards." – The Upwork Team

Transparency is essential for building trust with clients and safeguarding your agency from liability. Use explainability tools to help uncover how your AI systems make decisions. Build traceability into your workflows so every AI decision can be audited. Clearly label all AI-generated content – whether it’s a proposal draft, job alert, or performance analysis – so that both team members and clients know its origin.

Regular audits are a must. Conduct quarterly ethical reviews with your data team, developers, and other stakeholders to identify weaknesses in your algorithms early. Having a diverse development team can also help uncover blind spots in decision-making. Transparency isn’t just about meeting compliance requirements – it’s about earning trust and building a reputation for integrity, which ultimately strengthens your agency’s position in the long run.

How to Keep Humans in Control

While AI can draft proposals, a human must always review, edit, and send each one to comply with Upwork’s Terms. Automatically submitting proposals in bulk is considered spam and can lead to permanent account suspension. As mentioned earlier, transparency and accountability are non-negotiable: AI can assist, but humans must make the final decisions and take the final actions. This distinction underscores why human involvement is essential.

Why Humans Must Review AI Proposals

AI-generated drafts often miss critical details that could make or break a proposal. For example, a vague client brief might hide a "scope trap", or subtle budget cues could go unnoticed without human judgment. By reviewing proposals, humans can catch these nuances and avoid triggering Upwork’s spam filters.

Agencies using a human-in-the-loop approach typically achieve interview rates between 10% and 25%. With AI-assisted drafting, the time spent on each proposal drops to 2–3 minutes (including human review), compared to the 10–20 minutes required for manual drafting. However, the responsibility for every action taken by automation tools lies fully with the agency. Upwork does not accept "vendor error" as an excuse for violating its Terms of Service. This means every proposal, message, and follow-up must be reviewed, edited, and approved by a human before being sent.

Being Clear About AI Usage

Keep a log of all AI-assisted proposals so you can trace any proposal quickly and answer client questions about how it was produced. Incorporate safe automation workflows into your Standard Operating Procedures (SOPs) and perform quarterly compliance audits to ensure every tool aligns with Upwork’s policies. Together, these practices give you traceability and accountability.
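A proposal log does not need to be elaborate. The sketch below appends one JSON record per proposal to a log. The field names and the `make_log_entry` and `append_entry` helpers are hypothetical, chosen for this example, and `io.StringIO` stands in for a real file opened in append mode.

```python
import io
import json
from datetime import datetime, timezone


def make_log_entry(job_id, tool, reviewer, edited):
    """Build one audit record for an AI-assisted proposal (field names are illustrative)."""
    return {
        "logged_at": datetime.now(timezone.utc).isoformat(),
        "job_id": job_id,
        "ai_tool": tool,
        "reviewed_by": reviewer,
        "human_edited": edited,
        "submitted_manually": True,  # submission itself is never automated
    }


def append_entry(log_file, entry):
    """Append the record as one JSON line so the log stays easy to search."""
    log_file.write(json.dumps(entry) + "\n")


log = io.StringIO()  # stand-in for open("proposal_log.jsonl", "a")
append_entry(log, make_log_entry("job-123", "drafting-assistant", "Dana", edited=True))
print(log.getvalue())
```

One line per proposal makes quarterly audits straightforward: you can filter by reviewer, tool, or date without any special software.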

It’s also crucial to monitor what data your AI tools are processing and where that data is stored. Sensitive information, such as client job descriptions, budgets, or project details, could be exposed if AI tools are not properly managed. This highlights the importance of vigilance at every stage.

Oversight Framework Options

To maintain compliance, it’s essential to establish a clear framework that defines when AI should assist and when human oversight is required. The table below outlines how responsibilities can be divided for common lead generation tasks:

| AI Task | Human Role | Tools Used | Benefits |
|---|---|---|---|
| Proposal generation | Review, edit, and approve | AI writing assistants | Ensures quality and compliance with Terms of Service |
| Job matching automation | Validate recommendations | AI dashboards, RSS feeds | Reduces irrelevant matches |
| Context extraction | Verify deliverables and risks | LLM-based parsers | Avoids scope creep and misinterpretations |
| Follow-up sequences | Personalize messages | CRM, Slack bots | Builds trust with tailored insights |

Focus your automation efforts on internal tasks such as managing CRM pipelines, running analytics dashboards, and maintaining template libraries. These workflows operate outside of Upwork’s platform, avoiding bot detection while still improving efficiency. Classify your tools into two categories: "risky" (auto-submitting, scraping, or using login credentials) and "safe" (alerts, templates, or internal analytics). Disable any tools that mimic bot-like behavior. To stay ahead of potential risks, conduct a 7-day audit every quarter to identify and address new automation vulnerabilities.
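The "risky" versus "safe" classification can be kept as a small inventory that you rerun during each audit. In the sketch below, the capability labels and tool names are made up for illustration, and mass messaging is included in the risky set because it appears among the prohibited practices discussed earlier.

```python
from typing import Dict, Set

# Capabilities treated as risky in this sketch; the labels are hypothetical.
RISKY_CAPABILITIES = {"auto_submit", "scraping", "login_credentials", "mass_messaging"}


def classify_tool(capabilities: Set[str]) -> str:
    """A tool is 'risky' if it has at least one risky capability, else 'safe'."""
    return "risky" if capabilities & RISKY_CAPABILITIES else "safe"


def audit_tools(tools: Dict[str, Set[str]]) -> Dict[str, str]:
    """Label every tool in the inventory."""
    return {name: classify_tool(caps) for name, caps in tools.items()}


tools = {
    "slack-job-alerts": {"alerts"},
    "template-library": {"templates"},
    "bid-bot": {"auto_submit", "login_credentials"},
}

for name, label in audit_tools(tools).items():
    print(f"{name}: {label}")
```

Anything labeled risky is a candidate for disabling. The final call still belongs to whoever owns compliance in your agency.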

Case Studies: Agencies Doing It Right

Better Lead Scoring with AI

Agencies are saving time and improving efficiency by using AI to pre-evaluate job details – like client budgets, project scope, and technology requirements – in just 10 minutes instead of the usual 1–2 hours. This streamlined process has led to proposal view rates climbing to 25–30% while cutting unnecessary connects by about 30%. By avoiding low-quality, spam-like submissions, agencies are not only saving resources but also building a more professional reputation.
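To make the idea of pre-evaluating a job concrete, here is a minimal rule-based scoring sketch. It is not how any particular tool works. The weights, the 50-word cut-off for a "detailed" brief, and the field names are all assumptions for illustration, and a person should still decide whether to bid.

```python
def score_job(job, min_budget, preferred_tech):
    """Return a 0-100 score from budget fit, tech overlap, and brief detail."""
    score = 0
    if job["budget_usd"] >= min_budget:
        score += 40  # budget meets the agency's floor
    wanted = {t.lower() for t in preferred_tech}
    offered = {t.lower() for t in job["tech"]}
    score += min(len(wanted & offered), 3) * 15  # up to 45 points for tech overlap
    if len(job["description"].split()) >= 50:
        score += 15  # a longer brief suggests a clearer scope
    return score


job = {
    "budget_usd": 3000,
    "tech": ["React", "TypeScript"],
    "description": "Build an internal analytics dashboard for our sales team.",
}
print(score_job(job, min_budget=1000, preferred_tech=["react", "typescript", "node.js"]))
# 70
```

A transparent score like this is also easier to explain and audit than an opaque model, which ties back to the transparency points above.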

Convertix.io: Ethical AI in Action

Convertix.io showcases how ethical AI can deliver real results. The platform ensures compliance with Upwork’s Terms of Service by operating exclusively through verified human activity, preventing bot detection issues that could result in account suspensions. For $299 per month, Convertix.io runs continuously, and its onboarding process takes just 1–3 days – far faster than the 1–3 weeks typically required to train a human lead generation manager.

Agencies using Convertix.io report proposal reply rates of 5–15%, which at the upper end is roughly double the 5–7% typical of human-managed workflows. The platform also allows agencies to create custom AI prompts, tailoring searches to focus on ideal jobs, preferred technologies, and specific domain expertise. Essentially, it acts as a digital lead generation manager with a faster and more precise approach.

Portfolio-Based Matching for Better Results

Portfolio-based matching is raising the bar for ethical AI by emphasizing transparency and precision in proposal generation. Instead of relying on generic templates, this method selects the most relevant portfolio items – like project titles, URLs, and tech stacks – for each job. This approach provides clients with concrete examples of an agency’s capabilities, clearly showing how past work aligns with their needs. By offering detailed, tailored proposals, agencies can move away from mass submissions and focus on landing higher-value clients.
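One simple way to sketch portfolio-based matching is to rank portfolio items by how much their tech stack overlaps with the job’s. The example below uses Jaccard similarity for that ranking. This is an assumption chosen for illustration, not a description of any specific product, and the portfolio entries and example.com URLs are placeholders.

```python
def jaccard(a, b):
    """Overlap between two sets: size of intersection divided by size of union."""
    a, b = set(a), set(b)
    union = a | b
    return len(a & b) / len(union) if union else 0.0


def top_portfolio_items(job_tech, portfolio, k=2):
    """Return up to k portfolio items whose tech stack best overlaps the job's."""
    job = {t.lower() for t in job_tech}
    scored = [(jaccard(job, {t.lower() for t in item["tech"]}), item) for item in portfolio]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [item for score, item in scored[:k] if score > 0]


portfolio = [
    {"title": "Analytics dashboard", "url": "https://example.com/dash",
     "tech": ["React", "Node.js", "PostgreSQL"]},
    {"title": "Booking API", "url": "https://example.com/api",
     "tech": ["Python", "Django"]},
    {"title": "Landing page", "url": "https://example.com/landing",
     "tech": ["React"]},
]

for item in top_portfolio_items(["React", "Node.js"], portfolio):
    print(item["title"], item["url"])
```

Items with no overlap are dropped rather than padded in, so a proposal never cites work that is irrelevant to the job.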

Creating an Ethical AI Culture in Your Agency

Building a culture around ethical AI practices is key to maintaining compliance and earning trust in the long run. Here’s how you can make it happen.

Training Your Team on Ethical AI

Incorporate ethical AI principles into your onboarding process so that every new team member understands your agency’s standards from the start. But it shouldn’t stop there – ongoing education is just as important. Your team needs to stay informed about both the capabilities and the limitations of AI.

For those managing AI systems, provide specialized training. These individuals need a deeper understanding of technical and ethical considerations to detect bias and ensure accountability. Additionally, train your entire staff never to send AI-generated proposals without human review. This step is critical for catching errors or "hallucinations" that could harm your reputation. Also, teach them about data privacy risks, particularly the dangers of inputting proprietary client data or sensitive lead information into public AI tools like ChatGPT.

Use real-world examples to drive home the importance of these practices. Share lessons from your agency’s own experiences or industry case studies. For instance, if a data breach occurs due to improper AI usage, use it as a teaching moment to stress the importance of secure data handling and clear protocols for managing sensitive information.

"Ethical AI isn’t about perfection. It’s about building systems that protect your customers. Start with one principle at a time."

- Kateryna Quinn, Founder, Uplify

Equipping your team with this kind of knowledge naturally sets the stage for formalizing these practices into concrete policies.

Setting Up Internal AI Policies

Develop a detailed AI ethics policy that outlines acceptable and unacceptable practices, particularly in areas like Upwork lead generation. Be specific about what types of data can and cannot be shared with AI models to prevent leaks of proprietary information. Your policy should also mandate human review at key stages, ensure data is anonymized, and establish clear accountability measures.

Transparency with clients is another important piece of the puzzle. Use disclosure statements like "AI-recommended" or "automated suggestion" to make sure clients are aware of when AI is involved. Additionally, apply encryption and anonymization to any personal data used for training or internal AI tools.

To keep your systems in check, establish a culture of regular audits. Conduct quarterly reviews with data scientists and stakeholders to evaluate bias, improve explainability, and address any risks. Build traceability into your AI systems so that automated decisions can be audited and their underlying logic explained to both your team and your clients. As Kateryna Quinn puts it:

"Transparency isn’t weakness in AI systems. It’s your competitive advantage. Customers choose businesses they understand and trust."

- Kateryna Quinn, Founder, Uplify

With these policies in place, your agency can grow while staying true to its ethical commitments.

Scaling Your Agency Ethically

As your agency expands, maintaining ethical AI practices requires the right setup. Convertix.io offers three plans designed to align with ethical standards, no matter your agency’s size:

| Plan Name | Price | Features | Benefits |
|---|---|---|---|
| Starter | $299/mo | 300 proposals/month, portfolio matching | Ideal for small teams |
| Advanced | $599/mo | 600 proposals/month, 24/7 support | Great for growing agencies |
| Bespoke | Contact | Unlimited proposals, custom features | Tailored for larger needs |

At every stage, human-in-the-loop protocols remain essential. These ensure that AI-driven decisions align with ethical principles and allow for the correction of biased outcomes. The Bespoke plan even offers custom features tailored to your agency’s specific ethical guidelines and compliance needs, making it easier to scale responsibly.

Finally, as you grow, consider how your operations can remain efficient while minimizing environmental impact. Ethical AI isn’t just about fairness – it’s also about sustainability.

Conclusion: Moving Forward with Ethical AI

Ethical AI practices are crucial for building trust and ensuring long-term success on platforms like Upwork. Agencies that emphasize transparency, maintain human oversight, and adhere to compliance standards can significantly reduce risks like deepfake scams and data breaches while fostering stronger client relationships.

This ethical approach should inform both daily operations and strategic planning. To move forward effectively, it’s essential to balance AI personalization and automation with accountability. For instance, ensure all proposals undergo human review, encrypt sensitive data, and maintain transparency in AI-driven decisions. As the Upwork Team aptly states:

"Companies that strike the right balance between AI automation and human oversight are more likely to protect their reputation, build trust, and produce stronger outcomes."

- The Upwork Team

The legal landscape surrounding AI is shifting rapidly. Recent rulings highlight the importance of proper licensing and compliance. For example, key decisions in March 2025 and September 2025 addressed issues like AI-generated work and the use of pirated training data, underscoring the financial and reputational risks of neglecting these areas.

To start, implement small but impactful measures: conduct quarterly bias audits, clearly label AI-generated content, utilize AI tone matching for authentic communication, and maintain human oversight in all interactions. These steps not only safeguard your agency from potential pitfalls but also reinforce your reputation as a reliable partner in an increasingly automated world.

The agencies that thrive on Upwork in 2026 and beyond will be those that automate responsibly. By prioritizing ethical AI practices today, you’re laying the groundwork for a scalable, trustworthy, and future-ready business.

FAQs

How can I tell if an AI tool will violate Upwork’s automation rules?

To make sure an AI tool aligns with Upwork’s automation rules, stick to tasks like drafting proposals or organizing projects, and always include human oversight. Red flags include tools that submit proposals automatically, send mass messages, scrape profile data, or ask for your Upwork login credentials. Regularly check Upwork’s guidelines to stay informed about what’s allowed.

What’s the safest way to use AI without exposing client data?

To use AI safely while protecting client data, focus on tasks such as research and drafting. Always manually review and tailor proposals to meet specific needs. Avoid inputting sensitive client details into AI tools, and ensure your practices comply with Upwork’s policies. This method helps you stay secure and compliant while benefiting from AI’s capabilities.

How do I audit my AI workflows for bias and compliance?

To ensure AI workflows are free from bias and meet compliance standards, it’s essential to conduct regular evaluations. Focus on three key areas: fairness, policy adherence, and performance.

Start by reviewing outputs for any biased patterns or inconsistencies. This helps catch unintended issues early. Next, confirm that all processes align with Upwork’s platform policies. Compliance is non-negotiable. Additionally, manually review AI-generated content to ensure it meets ethical and quality benchmarks.

Performance metrics are another critical tool. Pay attention to response rates and client feedback to identify potential problems. These insights can guide adjustments and improvements.

Lastly, maintain open communication with clients. Personalize outputs and double-check that they align with both ethical standards and the client’s expectations. Transparency goes a long way in building trust and delivering quality results.